S3 to HDFS Sync App

Summary

Ingest and back up Amazon S3 data to Hadoop HDFS. This application copies files from the configured S3 location to the destination path in HDFS. The source code is available at: https://github.com/DataTorrent/app-templates/tree/master/s3-to-hdfs-sync.

Please send feedback or feature requests to: feedback@datatorrent.com

This document has a step-by-step guide to configure, customize, and launch this application.

Steps to launch application

-

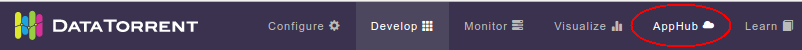

Click on the AppHub tab from the top navigation bar.

-

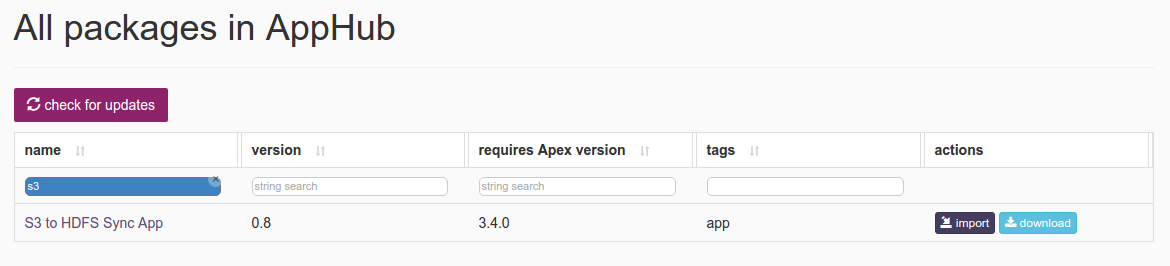

Page listing the applications available on AppHub is displayed. Search for S3 to see all applications related to S3.

Click on import button for

Click on import button for S3 to HDFS Sync App. -

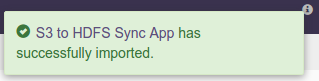

Notification is displayed on the top right corner after application package is successfully imported.

-

Click on the link in the notification which navigates to the page for this application package.

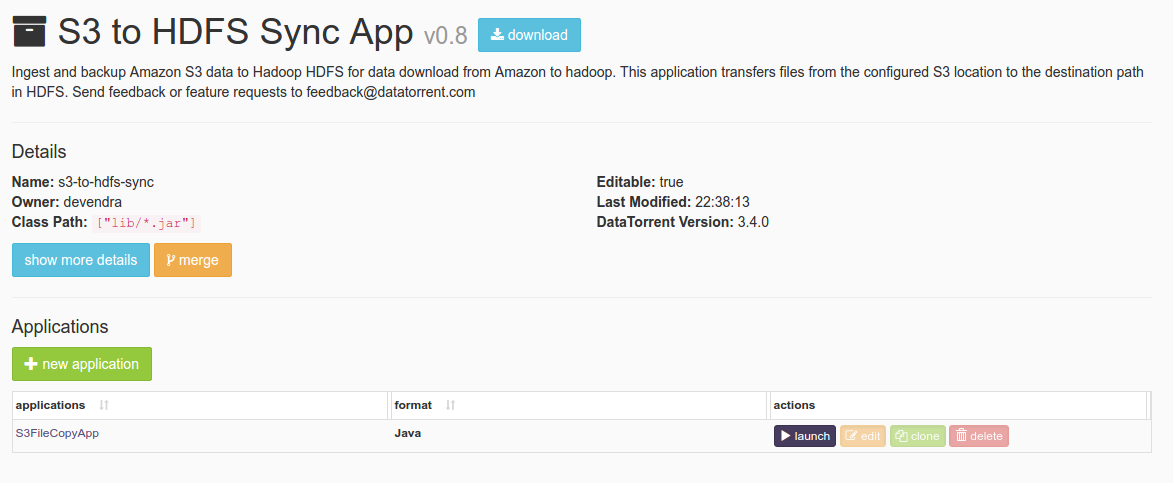

Detailed information about the application package like version, last modified time, and short description is available on this page. Click on launch button for

Detailed information about the application package like version, last modified time, and short description is available on this page. Click on launch button for S3-to-HDFS-Syncapplication. -

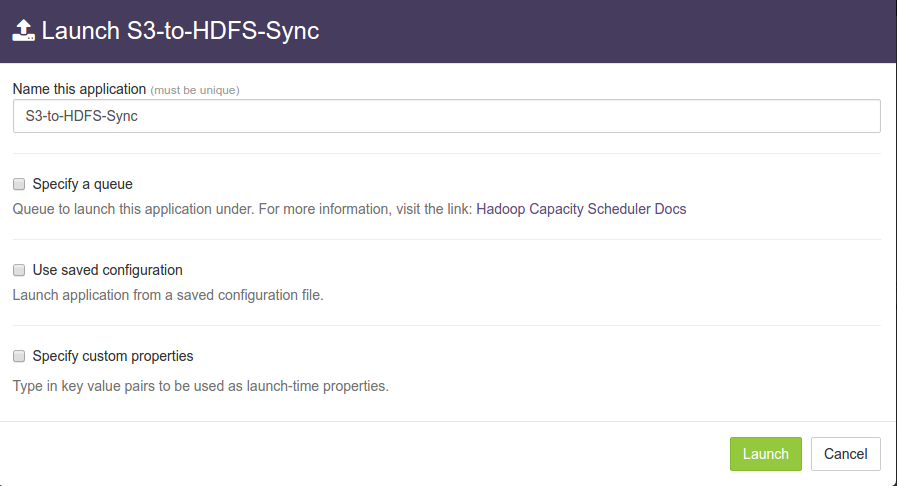

Launch S3-to-HDFS-Syncdialogue is displayed. One can configure name of this instance of the application from this dialogue.

-

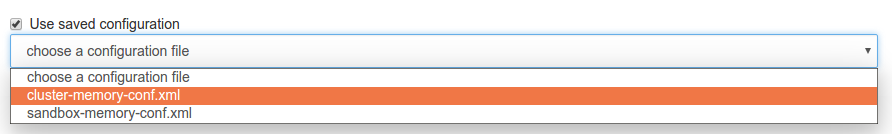

Select

Use saved configurationoption. This displays a list of pre-saved configurations. Please selectsandbox-memory-conf.xmlorcluster-memory-conf.xmldepending on whether your environment is the DataTorrent sandbox, or other cluster.

-

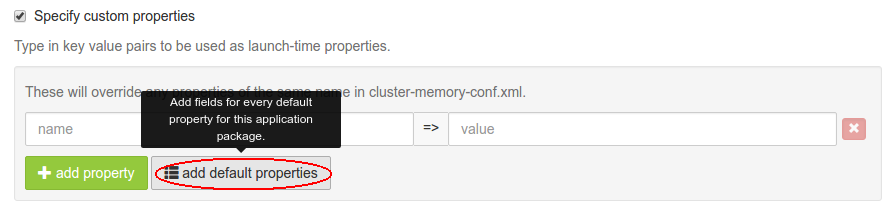

Select

Specify custom propertiesoption. Click onadd default propertiesbutton.

-

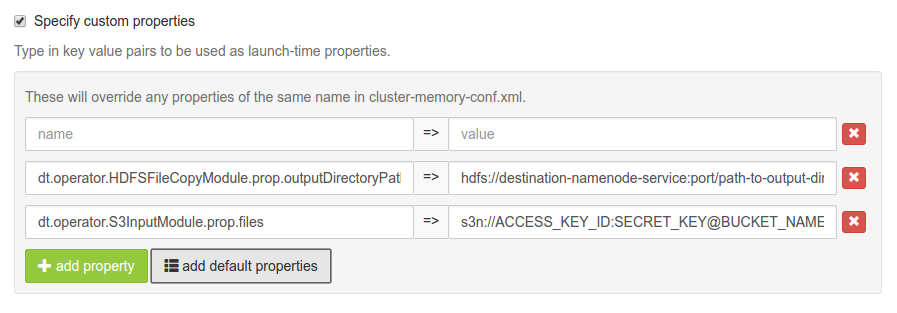

This expands a key-value editor pre-populated with mandatory properties for this application. Change values as needed.

For example, suppose we wish to copy from all files in

For example, suppose we wish to copy from all files in directory1fromcom.example.s3testusingACCESS_KEY_ID:SECRET_KEYcombination to/user/appuser/outputon the host cluster (on which app is running). Properties should be set as follows:name value dt.operator.HDFSFileCopyModule.prop.outputDirectoryPath /user/appuser/output dt.operator.S3InputModule.prop.files s3n://ACCESS_KEY_ID:SECRET_KEY@com.example.s3test/directory1 Details about configuration options are available in Configuration options section.

-

Click on the

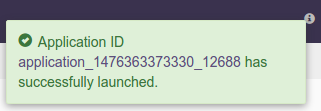

Launchbutton on lower right corner of the dialog to launch the application. A notification is displayed on the top right corner after application is launched successfully and includes the Application ID which can be used to monitor this instance and to find its logs.

- Click on the `Monitor` tab in the top navigation bar.

-

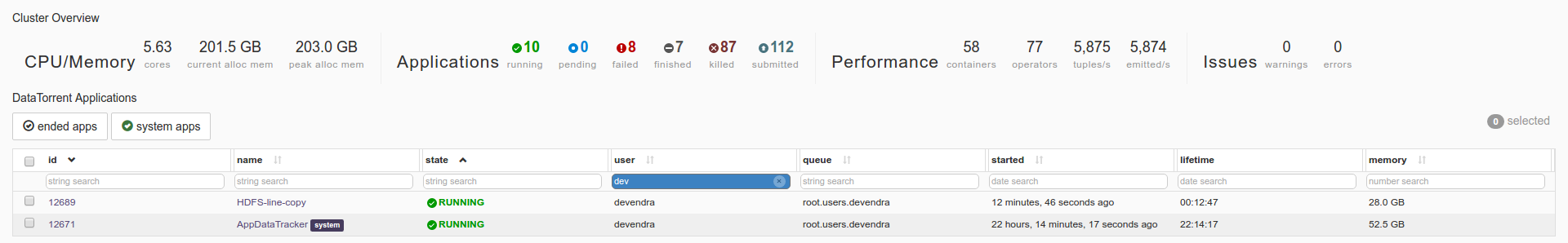

A page listing all running applications is displayed. Search for the current application based on name or application id or any other relevant field. Click on the application name or id to navigate to application instance details page.

-

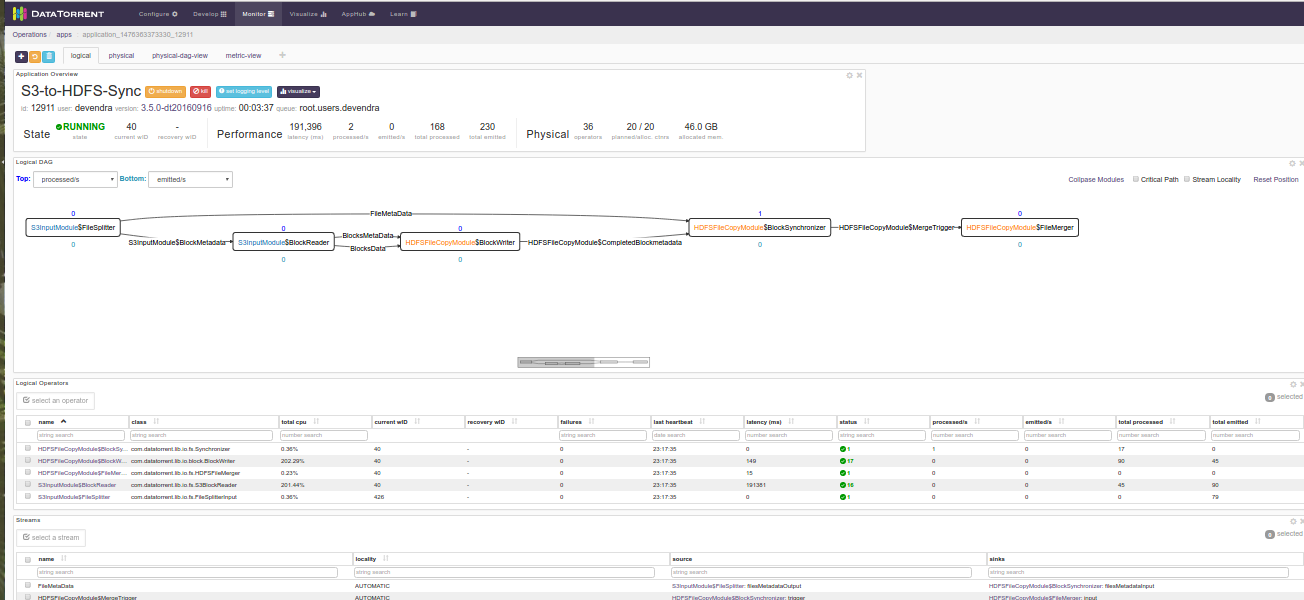

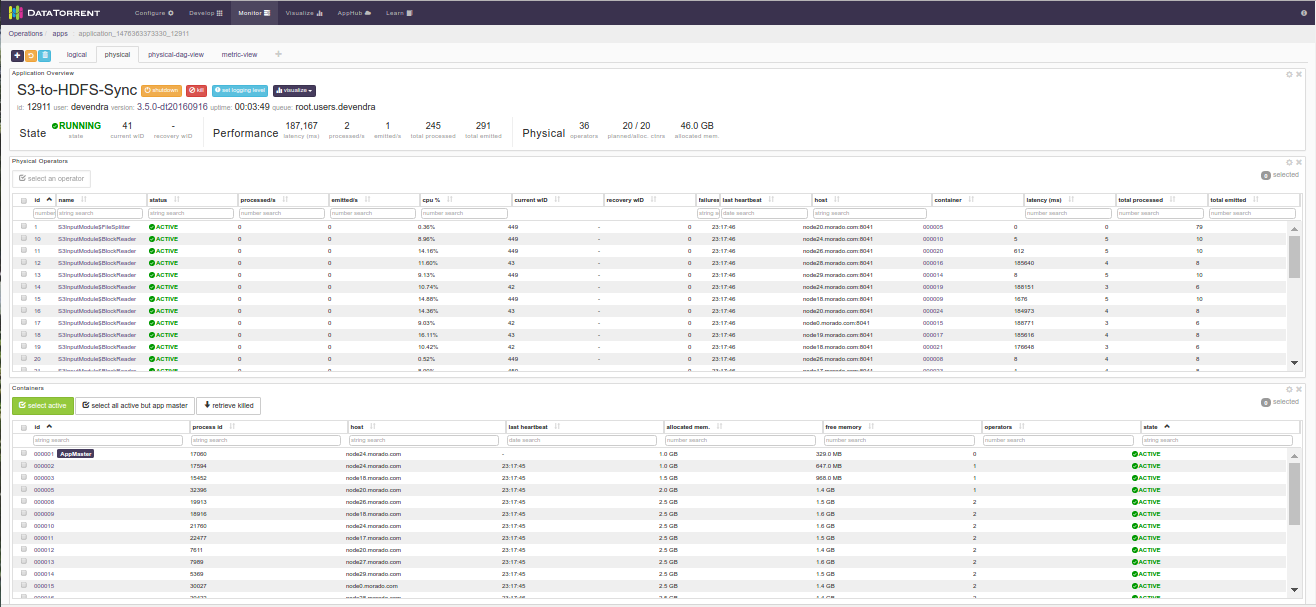

Application instance details page shows key metrics for monitoring the application status.

logicaltab shows application DAG, Stram events, operator status based on logical operators, stream status, and a chart with key metrics.

- Click on the `physical` tab to look at the status of the physical instances of the operators, containers, etc.

Configuration options

Mandatory properties

The end user must specify values for these properties. All of them are strings.

| Property | Description | Example |
|---|---|---|
| dt.operator.HDFSFileCopyModule.prop.outputDirectoryPath | HDFS path for destination directory | |
| dt.operator.S3InputModule.prop.files | Access URL for S3 source | s3n://ACCESS_KEY_ID:SECRET_KEY |
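
For illustration, the two mandatory properties can also be written as a Hadoop-style configuration file, the format assumed here for files such as `properties.xml`. This is only a sketch: the bucket `com.example.s3test`, the directory `directory1`, and the path `/user/appuser/output` are the illustrative values from the launch example, and `ACCESS_KEY_ID` and `SECRET_KEY` are placeholders, not real credentials.

```xml
<?xml version="1.0"?>
<configuration>
  <!-- Destination directory in HDFS (illustrative path) -->
  <property>
    <name>dt.operator.HDFSFileCopyModule.prop.outputDirectoryPath</name>
    <value>/user/appuser/output</value>
  </property>
  <!-- S3 source location; ACCESS_KEY_ID and SECRET_KEY are placeholders -->
  <property>
    <name>dt.operator.S3InputModule.prop.files</name>
    <value>s3n://ACCESS_KEY_ID:SECRET_KEY@com.example.s3test/directory1</value>
  </property>
</configuration>
```

Because the access URL embeds credentials, a file like this should be treated as sensitive.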

Advanced properties

There are pre-saved configurations based on the application environment. Recommended settings for the DataTorrent sandbox edition are in `sandbox-memory-conf.xml`, and those for a cluster environment are in `cluster-memory-conf.xml`.

| Property | Description | Type | Default for cluster-memory-conf.xml | Default for sandbox-memory-conf.xml |
|---|---|---|---|---|
| dt.operator.S3InputModule.prop.maxReaders | Maximum number of BlockReader partitions for parallel reading. | int | 16 | 1 |
| dt.operator.S3InputModule.prop.blocksThreshold | Rate at which block metadata is emitted per second | int | 16 | 1 |

You can override the default values of these advanced properties by specifying custom values for them in the `Specify custom properties` step described under Steps to launch application.
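
As a rough sketch of such an override, the fragment below sets both advanced properties in the Hadoop-style `<configuration>` format. The value `8` is an arbitrary illustration that sits between the sandbox and cluster defaults. It is not a recommended setting, and suitable values depend on the resources available in your environment.

```xml
<?xml version="1.0"?>
<configuration>
  <!-- Upper bound on BlockReader partitions (illustrative value) -->
  <property>
    <name>dt.operator.S3InputModule.prop.maxReaders</name>
    <value>8</value>
  </property>
  <!-- Block metadata emit rate per second (illustrative value) -->
  <property>
    <name>dt.operator.S3InputModule.prop.blocksThreshold</name>
    <value>8</value>
  </property>
</configuration>
```

The same name and value pairs can be entered in the key-value editor instead of a file.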

Steps to customize the application

- Make sure the utilities used in the steps below (`git` and Maven, which in turn needs a Java JDK) are installed on your machine and available on the `PATH` environment variable.

- Use the following command to clone the app-templates repository:

  `git clone git@github.com:DataTorrent/app-templates.git`

- Change directory to `app-templates/s3-to-hdfs-sync`:

  `cd app-templates/s3-to-hdfs-sync`

- Import this Maven project into your favorite IDE (e.g. Eclipse).

- Change the source code as per your requirements. This application only copies files from source to destination, so `Application.java` does not involve any processing operator in between.

- Make the corresponding changes in the test case and `properties.xml` based on your environment.

- Compile this project using Maven:

  `mvn clean package`

  This generates the application package with the `.apa` extension in the `target` directory.

- Go to the DataTorrent UI Management console in a web browser. Click on the `Develop` tab in the top navigation bar.

-

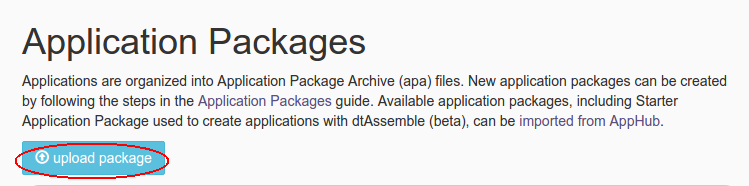

Click on

upload packagebutton and upload the generated.apafile.

- The application package page is shown with a listing of all packages. Click on the `Launch` button for the uploaded application package, then follow the steps for launching an application.